The Tourism of Tech Worship

Wall Street analysts love a good field trip. They put on high-visibility vests, walk through echoing halls of server racks, and marvel at the blue LEDs as if they’ve discovered the source code of the universe. They come back and write glowing reports about "secular tailwinds" and the "physicality of compute."

They are being sold a curated museum tour.

The standard narrative—the one clogging your feed right now—claims that the more steel we put in the ground and the more GPUs we cram into racks, the more certain the AI windfall becomes. It treats data centers like digital oil wells. Buy the land, buy the chips, pump the profit.

This logic is a trap. It ignores the brutal reality of hardware depreciation, the looming "Power Wall," and the fact that we are currently building the world’s most expensive collection of soon-to-be paperweights.

The GPU Obsolescence Death Spiral

Investors are cheering for massive capital expenditure. They see a $100 billion build-out and think "growth." They should think "burn rate."

In the traditional SaaS era, a server was a commodity that lasted five to seven years. In the AI era, the performance leap between chip generations is so aggressive that hardware becomes economically unviable in less than three years. If you are building a $5 billion facility around today's flagship silicon, you are racing against a clock that is ticking twice as fast as you realize.
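To put rough numbers on that clock, here is a back-of-the-envelope sketch of straight-line depreciation on the same hardware spend over a six-year life versus a three-year life. The $3 billion hardware figure is a made-up illustration, not a number from any real facility, and real accounting schedules are messier than this.

```python
def annual_depreciation(hardware_cost: float, useful_life_years: float) -> float:
    """Straight-line depreciation: spread the cost evenly over the useful life."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return hardware_cost / useful_life_years


# Hypothetical figure for illustration only: accelerators inside a larger build.
HARDWARE_COST = 3_000_000_000  # USD

saas_era = annual_depreciation(HARDWARE_COST, 6)  # middle of the five-to-seven-year range
ai_era = annual_depreciation(HARDWARE_COST, 3)    # three-year economic life

print(f"6-year life: ${saas_era / 1e9:.1f}B per year")
print(f"3-year life: ${ai_era / 1e9:.1f}B per year")
```

Same hardware, same invoice, but halving the useful life doubles the annual charge against earnings.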

Most analysts focus on "supply chain bottlenecks." They think the risk is not getting enough chips. The real risk is owning too many of the wrong ones. We are seeing a massive "gold rush" where the shovels are made of ice. By the time you reach the mine, the shovel has melted.

I’ve sat in rooms where CFOs realize that the "state-of-the-art" cluster they greenlit eighteen months ago is now a legacy liability that can’t run the latest frontier models at a competitive cost-per-token. They don't put that in the brochures they hand to visiting stock pickers.

The Power Wall and the Myth of Infinite Scale

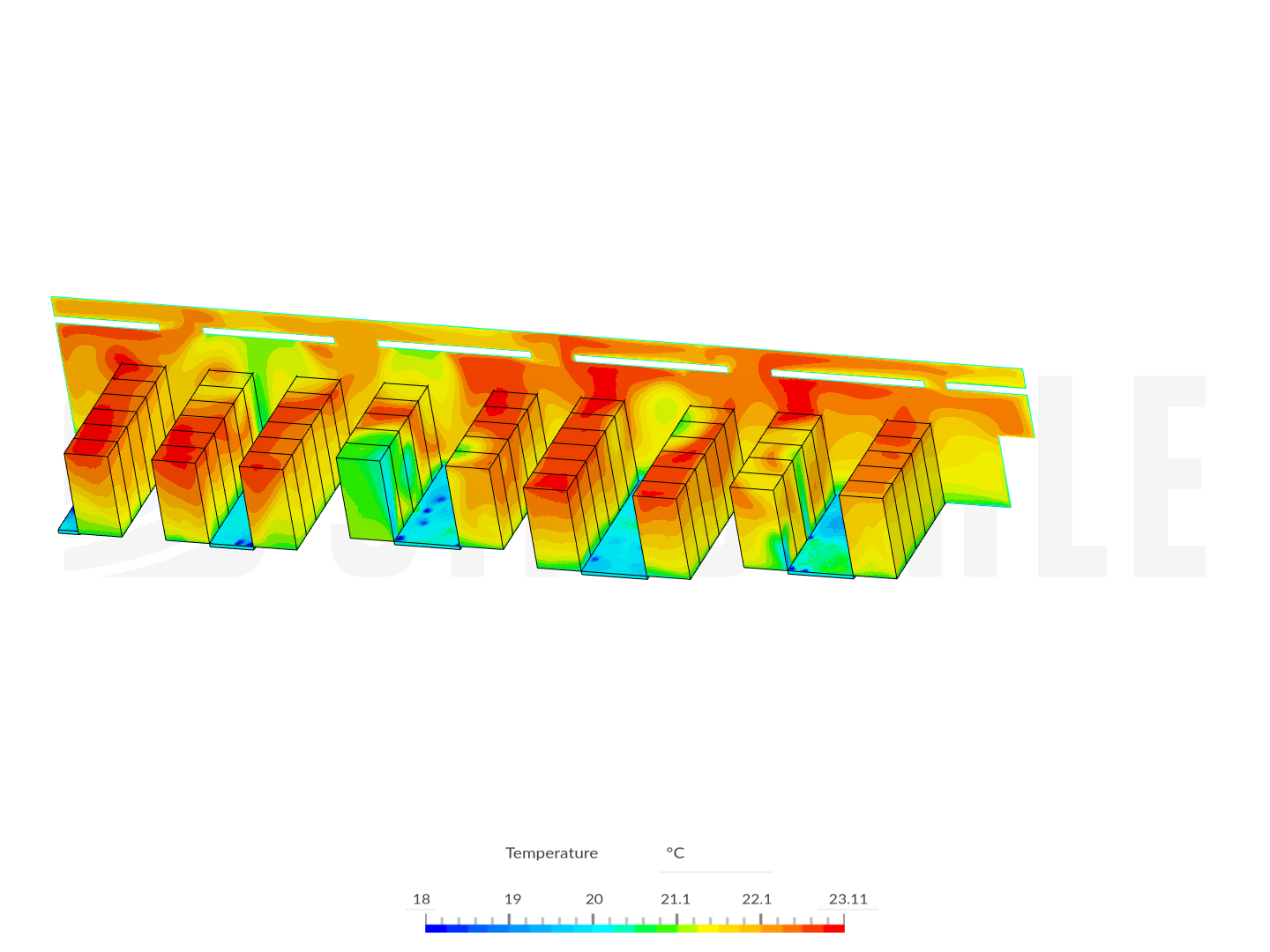

The "tours" always highlight the cooling systems. They show off the liquid cooling manifolds and the massive HVAC units like they are badges of honor. They aren't. They are symptoms of a failing architecture.

We are approaching a physical limit that no amount of venture capital can bypass. A standard rack used to pull 10kW to 15kW. AI racks are pushing 100kW and heading toward 120kW. This isn't just an engineering challenge; it’s a grid-level crisis.
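A quick sketch shows why rack density turns into a utility problem. The rack count and the PUE (power usage effectiveness, the ratio of total facility power to IT power) below are assumptions chosen for illustration; only the per-rack figures come from the paragraph above.

```python
def facility_load_mw(racks: int, kw_per_rack: float, pue: float) -> float:
    """Total grid draw in megawatts: IT load scaled up by cooling and overhead (PUE)."""
    it_load_kw = racks * kw_per_rack
    return it_load_kw * pue / 1000.0


# Hypothetical facility: 1,000 racks with an assumed PUE of 1.3.
RACKS = 1000
PUE = 1.3

legacy = facility_load_mw(RACKS, 12.5, PUE)   # midpoint of the 10kW to 15kW range
ai_hall = facility_load_mw(RACKS, 100.0, PUE)

print(f"Legacy racks: {legacy:.2f} MW")
print(f"AI racks:     {ai_hall:.2f} MW")
```

Under these assumptions the same floor plan goes from a load a local substation can absorb to one that needs its own line on the utility's planning map.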

The industry is currently betting that we can continue to centralize compute in massive "megascale" hubs. But the power isn't there. I’ve seen projects delayed by four years because the local utility literally cannot deliver the megawatts.

The Real Power Math

- Transmission Loss: You can build a data center in the middle of a desert near a solar farm to avoid hauling power across the grid, but moving the data back to users introduces latency that kills real-time AI applications.

- Thermal Density: We are hitting the limits of how much heat can be moved out of a physical space before the fans consume more energy than the chips.

- The Grid Gap: We are trying to run a 21st-century intelligence revolution on a 20th-century copper grid.

The contrarian truth? The future isn't bigger data centers. It’s smaller, hyper-efficient, decentralized nodes. The "bigger is better" era of the data center is a bubble fueled by cheap debt and optimistic thermal modeling.

Why Liquid Cooling is a Band-Aid, Not a Cure

The glowing tour write-ups tend to wax poetic about liquid cooling. It sounds futuristic. In reality, it's a desperate measure. Moving from air to liquid is an admission that our chips are becoming too inefficient.

When you see a facility bragging about its massive water usage or its closed-loop cooling, you’re looking at a company fighting a losing war against the second law of thermodynamics. Entropy always wins.

The winners won't be the companies that build the best radiators. They will be the companies that rethink the architecture of the chip itself to operate at lower voltages. Right now, we are just overclocking our way to a margin collapse.

The "Zombie" Data Center Phenomenon

Here is a term you won’t hear on an earnings call: Zombie Clusters.

These are facilities filled with H100s or previous-generation hardware that are technically functional but economically dead. They are too expensive to run compared to the newest silicon, but too new to write off without tanking the balance sheet.

I’ve seen facilities where 30% of the floor space is dedicated to hardware that is "available" but rarely scheduled because the power cost to run it exceeds the revenue it generates. They keep the lights on for the tours, but the fans are silent.
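The zombie test is simple arithmetic: does an hour of runtime earn more than the electricity it burns? Here is a minimal sketch. Every number in it (power draw, PUE, electricity price, revenue per GPU-hour) is a hypothetical placeholder, and it deliberately ignores depreciation, staffing, and networking, all of which make the picture worse.

```python
def hourly_margin(
    gpu_power_kw: float,
    pue: float,
    price_per_kwh: float,
    revenue_per_gpu_hour: float,
) -> float:
    """Revenue minus electricity cost for one GPU running for one hour."""
    power_cost = gpu_power_kw * pue * price_per_kwh
    return revenue_per_gpu_hour - power_cost


def is_zombie(
    gpu_power_kw: float,
    pue: float,
    price_per_kwh: float,
    revenue_per_gpu_hour: float,
) -> bool:
    """A GPU is 'economically dead' here if running it loses money on power alone."""
    return hourly_margin(gpu_power_kw, pue, price_per_kwh, revenue_per_gpu_hour) < 0


# Hypothetical inputs: 1.0 kW per GPU including its share of the server,
# PUE of 1.4, electricity at $0.12 per kWh.
print(is_zombie(1.0, 1.4, 0.12, revenue_per_gpu_hour=0.10))  # market price has collapsed
print(is_zombie(1.0, 1.4, 0.12, revenue_per_gpu_hour=0.50))  # still worth scheduling
```

The hardware never changes between those two calls. Only the price the market will pay for its output does.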

As the "Inference Era" takes over from the "Training Era," this problem will explode. Training requires raw, brutal power. Inference requires efficiency and proximity. Most of the data centers being built today are designed for the former and will be disastrously ill-suited to the latter.

Follow the Copper, Not the Hype

If you want to know who is actually winning, stop looking at the logo on the building and start looking at the transformer yard.

The real bottleneck isn't GPUs. It isn't even "AI talent." It's specialized high-voltage electrical equipment. The lead times for the transformers required to power these big-name facilities are now measured in years, not months.

The companies winning aren't the ones with the best AI models; they are the ones who secured the rights to the electricity in 2019. Everyone else is just LARPing as a tech titan while waiting for a utility company to return their calls.

The Efficiency Paradox (Jevons Paradox) in Compute

There is a common belief that as AI chips get more efficient, data center energy needs will go down. The Jevons Paradox says the opposite: as a resource becomes more efficient to use, the total consumption of that resource increases rather than decreases.

Increased efficiency leads to more demand, which leads to more centers, which leads to a greater strain on the grid. We aren't building a more sustainable future; we are building a more fragile one.
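The mechanism can be sketched with a toy constant-elasticity demand model. This is an illustration under stated assumptions, not a forecast: it assumes the price of compute falls in proportion to energy per unit, and the elasticity values are invented. The paradox only bites when demand is elastic enough (elasticity above 1), which is the bet this argument is making about AI compute.

```python
def relative_total_energy(efficiency_gain: float, demand_elasticity: float) -> float:
    """Total energy use relative to baseline after an efficiency gain.

    Assumes energy per unit of compute falls to 1/efficiency_gain, the price
    of compute falls in proportion, and demand follows a constant-elasticity
    curve: demand = price ** (-demand_elasticity).
    """
    energy_per_unit = 1.0 / efficiency_gain
    relative_price = 1.0 / efficiency_gain
    relative_demand = relative_price ** (-demand_elasticity)
    return energy_per_unit * relative_demand


# Chips become twice as efficient; elasticities are hypothetical.
elastic = relative_total_energy(2.0, demand_elasticity=1.5)    # demand responds strongly
inelastic = relative_total_energy(2.0, demand_elasticity=0.5)  # demand barely moves

print(f"Elastic demand:   {elastic:.2f}x baseline energy")
print(f"Inelastic demand: {inelastic:.2f}x baseline energy")
```

With elastic demand, doubling efficiency raises total energy use above the baseline; with inelastic demand, it lowers it. Everything hinges on which world AI compute lives in.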

The "tour" you read about didn't mention the environmental debt being accrued. It didn't mention that a single large-scale training run can consume as much electricity as a small city uses in a month. It shows you the polished floor, not the carbon footprint or the strained local infrastructure.

Stop Asking if the Facilities Work

Of course they "work." They are engineering marvels. But a Ferrari works perfectly while driving off a cliff.

The question isn't whether these facilities can process data. The question is whether the economic model behind them can survive a 36-month hardware refresh cycle and a global energy shortage.

The current data center gold rush is built on the assumption that demand for compute is infinite and price-inelastic. It isn't. When the "AI hype" meets the "Economic Reality," the first thing to get cut will be the massive cloud bills that fund these monuments to silicon.

The Actionable Pivot

If you are an investor or a leader, stop looking at "Data Center REITs" as a safe play.

- Audit the Thermal Ceiling: If a facility isn't designed for 100kW+ racks today, it’s a legacy asset tomorrow.

- Verify Power Purity: Not just "renewables," but firm, base-load power. Intermittent solar doesn't run a 24/7 training cluster.

- Watch the Interconnects: The building is just a shell. The value is in the proprietary networking fabric that allows 50,000 GPUs to act as one. If they are using off-the-shelf networking, they have no moat.

The industry isn't "evolving." It's cannibalizing itself. The very facilities being touted as the backbone of the future are often the biggest anchors dragging down the balance sheets of the present.

Stop buying the tour. Start looking at the power bill.