The alliance between Redmond and the high-stakes world of defense contracting just hit a legal tripwire. Microsoft is moving to freeze a Pentagon blacklisting of Anthropic, filing for a temporary restraining order that aims to preserve its multi-billion-dollar push into the federal "secure cloud" market. The move is a desperate play for time. By shielding Anthropic from a sudden exclusionary mandate, Microsoft isn’t just protecting a partner; it is defending the structural integrity of its own defense-sector roadmap.

The core of the dispute rests on a sudden shift in how the Department of Defense (DoD) evaluates the safety and "sovereign risk" of large language models. Historically, Microsoft has acted as the gatekeeper for these technologies within government circles. But a recent, quiet determination from the Pentagon’s vetting arm suggested that Anthropic’s underlying architecture—despite its "Constitutional AI" marketing—might harbor vulnerabilities or ownership ties that do not align with the strictest military requirements. Microsoft’s legal team is now arguing that a blacklist at this stage would cause irreparable harm to existing contracts and national security interests.

The Infrastructure Trap

Defense contracts are not built on software alone. They are built on deeply integrated infrastructure. When Microsoft integrated Claude—Anthropic’s flagship model—into its Azure Government Secret cloud, it wasn't just adding a feature. It was weaving a specific logic into the fabric of military intelligence processing.

If the Pentagon pulls the plug now, the fallout isn't a simple "uninstall." It’s a systemic failure. Entire workflows designed to parse signals intelligence or automate logistical supply chains would go dark. Microsoft’s argument for a temporary restraining order hinges on this technical reality. They are telling the courts that the government cannot turn off a switch when that switch is wired into the very foundation of the building.

The risk for Microsoft is purely financial, yet they are framing it as a matter of tactical readiness. This is the classic defense-contractor playbook. By making the technology "too integrated to fail," they force the hand of the regulators. If the restraining order is granted, it gives Microsoft months to lobby, refine the code, or restructure the ownership stakes that the Pentagon currently finds distasteful.

The Quiet Conflict Over Sovereign AI

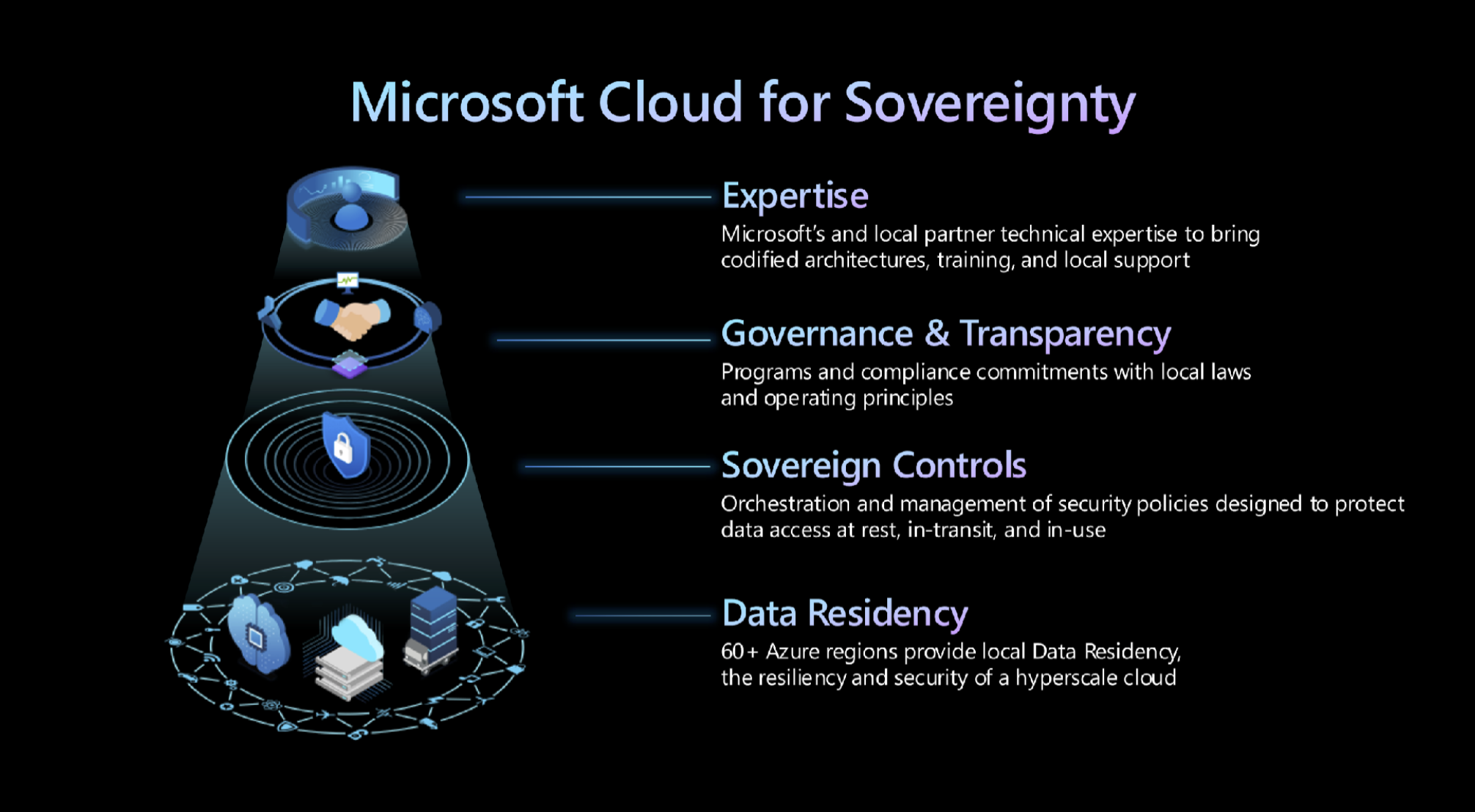

Why Anthropic? Why now? The Pentagon is currently obsessed with "Sovereign AI." This isn't about where the company is headquartered, but where the data lives, how the weights are trained, and whether a foreign adversary could theoretically trigger a "sleeper" behavior within the model.

The military isn't worried about Claude being "woke" or "unhelpful." They are worried about it being compromised. There is a lingering suspicion within certain factions of the DoD that the rapid-fire scaling of these models has outpaced the government's ability to audit them for backdoors. Anthropic, which has taken significant investment from various global sources, is being viewed through a lens of extreme skepticism.

Microsoft, meanwhile, sees this skepticism as an existential threat to its Azure dominance. If the Pentagon can arbitrarily blacklist a model that Microsoft has spent hundreds of millions to host and secure, then the "Azure" brand loses its primary selling point: certainty.

The Problem with Constitutional AI in Combat

Anthropic markets its models as being governed by a "constitution"—a set of rules the model must follow during training to ensure it remains helpful and harmless. To a civilian developer, this sounds like a safety feature. To a military commander, it looks like a potential point of failure.

- Rule Conflict: If a model’s "constitution" forbids it from assisting in lethal operations, it becomes a paperweight in a tactical environment.

- Hidden Biases: The "values" baked into the model are subjective. The Pentagon wants models that follow orders, not a private company’s ethical code.

- Audit Gaps: Microsoft’s lawyers are fighting because the Pentagon hasn't shared the specific "test results" that led to the blacklist.

The lack of transparency is the sharpest tool in Microsoft's legal kit. They are claiming that the government is acting "arbitrarily and capriciously," the standard courts apply under the Administrative Procedure Act when deciding whether to set aside an agency decision. They want the Pentagon to show its work. If the DoD cannot offer a reasoned basis for treating Anthropic as a security risk, Microsoft argues, the blacklist cannot stand.

The Shadow of the JEDI Contract

Every person in the Microsoft legal department remembers the JEDI (Joint Enterprise Defense Infrastructure) debacle. That was a years-long war over a single $10 billion cloud contract that the Pentagon ultimately canceled in 2021 amid legal infighting and allegations of political interference.

This current battle is JEDI 2.0, but with higher stakes. In the JEDI era, the fight was over where data was stored. Now, the fight is over the intelligence that processes that data. If Microsoft loses the ability to offer a variety of "best-in-class" models like Claude, they become a utility provider rather than an intelligence partner.

The Pentagon, for its part, is trying to avoid vendor lock-in. By blacklisting Anthropic, they are effectively telling Microsoft: "We don't care if it's on your cloud; we don't trust the engine." This is a direct shot at the "walled garden" strategy Microsoft has spent years cultivating.

The Technical Reality of a Restraining Order

What happens if the court says yes? A temporary restraining order (TRO) would force the Pentagon to keep the Anthropic models active on the networks for a short, fixed period, typically up to 14 days and extendable to 28, while a longer-lasting preliminary injunction is debated.

During this window, Microsoft will attempt to "sanitize" the issue. This could involve:

- Air-gapping specific Anthropic instances so they have zero connection to the outside world.

- Hard-coding overrides that bypass the model's "constitution" for specific military tasks.

- Restructuring the financial data-sharing agreements between Redmond and Anthropic.

This is a high-wire act. If Microsoft pushes too hard, they risk alienating the very generals who sign the checks. But if they don't fight, they concede that the Pentagon, not Microsoft, dictates the roadmap for Azure.

The Real Winner Might Be Open Source

While Microsoft and the Pentagon trade legal blows over proprietary models, a quiet shift is happening in the lower ranks of the defense community. Open-source models—where the code and weights are fully transparent—are becoming increasingly attractive.

The Pentagon's problem with Anthropic is that Claude is a "black box." They can't see why it makes certain decisions. With open-source alternatives, the military can conduct its own audits without asking for permission or waiting for a legal discovery process. Microsoft’s insistence on proprietary partnerships might be their greatest strategic blunder. They are fighting for the right to sell a product that the customer is increasingly afraid to use.

The litigation in D.C. district court will likely hinge on whether Microsoft can prove "irreparable harm." In the world of enterprise software, that's usually code for "lost revenue." But in the world of defense, Microsoft is betting that the court will see the potential for a "technological gap" as a threat to the nation itself.

The irony is thick. Anthropic was founded on the principle of safety and avoiding the "AI arms race." Now, it is the primary casualty in a legal war to ensure that very arms race continues unabated on the taxpayer’s dime.

The Strategy of Forced Compliance

Microsoft's legal filing reveals a broader strategy. They aren't just looking for a "yes" from the court; they are looking for a seat at the table where the rules for AI vetting are written. Right now, the Pentagon’s vetting process is a mystery. There is no clear rubric. No pass/fail grade that a company can study for.

By filing this suit, Microsoft is attempting to force the Department of Defense to codify its AI standards. If the standards are public, Microsoft can build to them. If the standards remain secret, the Pentagon can kill any project at any time for any reason.

This isn't just about Anthropic. It's about who owns the "brain" of the modern military. If Microsoft wins this TRO, they prove that the tech giants have enough gravity to pull the Department of Defense out of its own orbit. If they lose, it marks the beginning of a new era where "Big Tech" is treated with the same suspicion as a foreign power.

Analyze the filing carefully and you see the underlying fear: if Anthropic is gone today, is OpenAI next? Microsoft has hitched its wagon to external model providers. If those providers are deemed "security risks," Microsoft's entire federal cloud strategy is nothing but empty server racks.

Check the court docket for the hearing date on the motion for the preliminary injunction. If the judge grants it, expect a flurry of "security updates" from Anthropic that look remarkably like the concessions the Pentagon has been quietly demanding for months.