China is flooding the market with bipedal machines that can walk, dance, and even perform tai chi, but they're still missing the "brain" that makes them actually useful. You’ve seen the videos from the World Robot Conference in Beijing. Dozens of shiny, metallic figures standing in rows, mimicking human gestures with eerie precision. It looks like the future has arrived. It hasn’t. Despite the massive subsidies and the sheer speed of Chinese manufacturing, these bots are largely hardware-heavy and software-light. They're waiting for a breakthrough in embodied AI that hasn't happened yet.

The obsession with the "ChatGPT moment" for robotics is everywhere in Shenzhen and Hangzhou. It refers to that specific point where a technology jumps from a quirky lab experiment to a tool that everyone can use. For LLMs, that happened in late 2022, when OpenAI released a chatbot that could reliably follow a user's intent. For humanoid robots, that moment requires more than just processing text. It requires a robot to see a messy kitchen, understand what a "dirty plate" is, and figure out the physics of picking it up without shattering it. China is world-class at building the plate-spinner, but it's still struggling to write the code for the juggler’s mind.

The Hardware Trap and the Silicon Valley Gap

Chinese firms like Unitree, UBTECH, and Fourier Intelligence are incredibly good at lowering costs. You can now buy a humanoid robot from Unitree for about $16,000. That’s a staggering price point when you consider that Boston Dynamics’ Atlas or Tesla’s Optimus are expected to cost significantly more. But hardware is only half the battle. If you have a Ferrari body with a lawnmower engine, you aren't winning any races.

The real bottleneck is General World Models. This is the tech that allows a robot to predict what happens next in the physical world. While US-based firms like Figure AI and Physical Intelligence are pouring billions into "end-to-end" neural networks, many Chinese players are still relying on older, rule-based programming for specific movements. It's the difference between a robot that knows how to walk on a flat floor because it was told exactly where to put its feet, and a robot that learns to walk by "feeling" the ground.
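To make the flat-floor contrast concrete, here is a toy sketch in Python. It is not how any of the companies named here control their robots, and it involves no learning at all: `scripted_step` and `sensing_step` are hypothetical names, and the simple "lower the foot until contact" loop merely stands in for the sensor-driven behavior a trained policy would produce.

```python
def scripted_step(ground_height, planned_height=0.0):
    """Open-loop: place the foot at a pre-programmed height.

    Returns the placement error in metres. Negative means the script
    expected the floor to be lower than it really is (a stumble);
    positive means the foot stops short of the ground.
    """
    return planned_height - ground_height


def sensing_step(ground_height, start_height=0.20, increment=0.01):
    """Closed-loop: lower the foot in small increments until contact.

    Assumes the ground is below start_height. The comparison below is a
    stand-in for a real contact or force sensor reading.
    """
    foot = start_height
    while foot > ground_height:
        foot -= increment
    return foot - ground_height


# Hypothetical terrain: flat floor, a cable on the floor, a small kerb.
for ground in (0.0, 0.03, 0.08):
    print(
        f"ground={ground:.2f} m  "
        f"scripted error={scripted_step(ground):+.3f} m  "
        f"sensing error={sensing_step(ground):+.3f} m"
    )
```

On the flat floor the script is perfect. Once the ground height changes, the scripted error grows with the size of the obstacle, while the sensing version stays within one increment of the surface.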

Beijing is throwing money at this. The Ministry of Industry and Information Technology wants mass production by 2025. They’ve designated humanoid robots as a "new productive force." But you can't just mandate an architectural breakthrough in AI. You need data. Specifically, you need "video-to-action" data. This is where the gap lives. China has the factories to build the shells, but the high-end GPU clusters and the massive datasets required to train these physical brains are still concentrated in the West, partly due to ongoing chip export restrictions.

Why Dexterity Is Harder Than Poetry

Writing a poem about a sunset is easy for an AI. Picking up a strawberry without crushing it is a nightmare. This is Moravec’s Paradox: the high-level reasoning that humans find hard takes comparatively little computation, while the low-level sensorimotor skills we perform without thinking demand enormous computational resources.

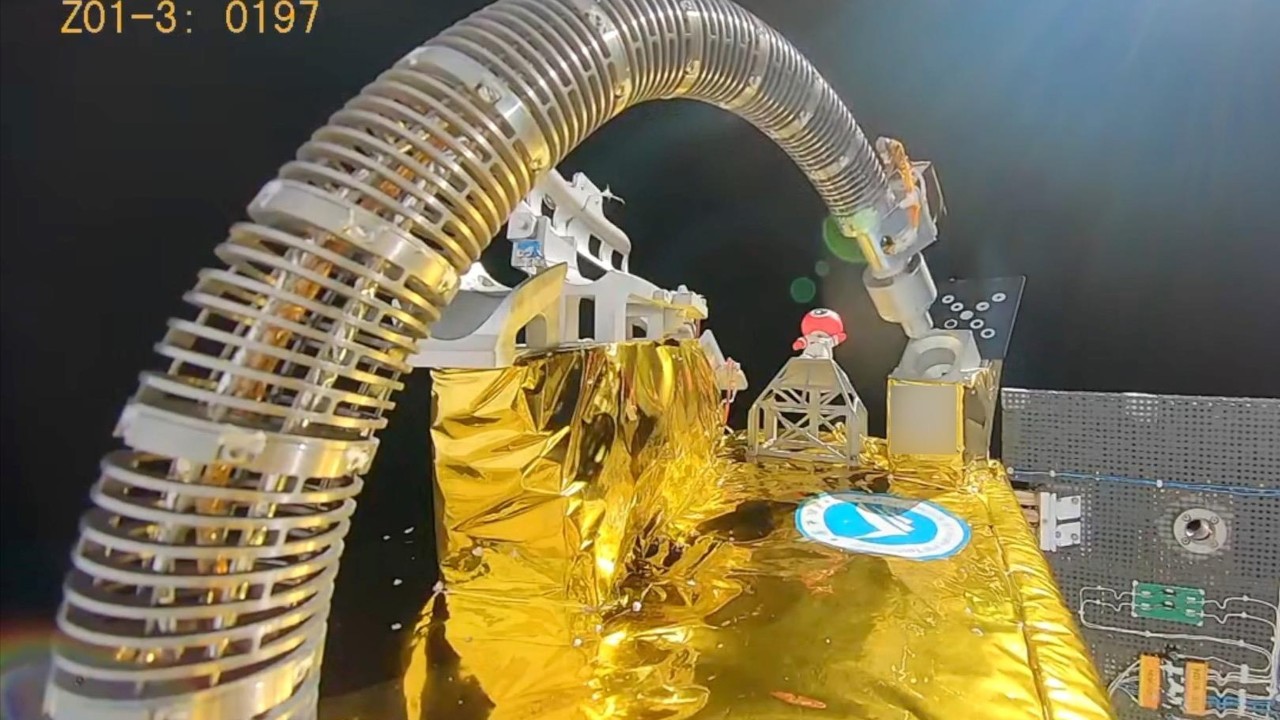

Chinese engineers are great at the mechanical side. They’ve mastered high-torque-density motors and harmonic drive reducers. These are the "muscles" of the robot. If you look at the Fourier GR-1, it has impressive joint movements. But watch it try to navigate an unpredictable environment. It stutters. It hesitates. It lacks the fluid, reactive intelligence that comes from a foundation model trained on millions of hours of physical interaction.

I’ve talked to founders in this space who admit the pressure to "demo" is hurting the long-term R&D. In the Chinese tech ecosystem, if you don't show a cool video every three months, the VC funding dries up. This leads to "over-optimized" demos—robots doing specific tasks in controlled settings that look great on TikTok but fail in a real warehouse. To get to that ChatGPT moment, the industry needs to stop focusing on the dance moves and start focusing on the "unsupervised learning" of physical tasks.

The Data Scarcity Problem

Where does the data come from? For ChatGPT, it was the internet. For a robot, you can't just scrape the web. You need:

- Teleoperation data (humans wearing VR headsets or motion-capture suits to "show" the robot how to move).

- Synthetic data from high-fidelity simulations like NVIDIA’s Isaac Sim.

- Real-world "edge case" data from robots actually failing in the field.

China has a massive advantage in the third category because they have more robots in the wild. But they're lagging in the first two. Teleoperation is slow and expensive. Simulation requires massive GPU power—the very thing that is becoming harder for Chinese firms to acquire in bulk.

The Ghost in the Machine is Still Missing

We're seeing a pivot toward function over form. Companies like Agility Robotics in the US are already putting their "Digit" bot to work in Amazon warehouse trials. It doesn't look like a human (it has bird-like legs), but it works. China is still very focused on the humanoid form factor. There’s a cultural drive to make robots look like us, perhaps because of the looming demographic crisis and the need for elder care.

But looking like a human makes the software problem ten times harder. Balance is a constant struggle. If the robot has two legs, it’s always one millisecond away from falling over. If China wants to win this, they might need to stop trying to build "General Purpose" bots for a few years and focus on "Functional Humanoids" that do one thing—like moving boxes—perfectly.

Tesla's Optimus is the elephant in the room. Elon Musk is betting that he can take the "brain" from Full Self-Driving and put it into a bipedal body. Since Tesla has a huge manufacturing footprint in Shanghai, there’s a lot of "cross-pollination" happening. Chinese engineers are watching Tesla closely. The moment Optimus starts doing real work on a factory floor in China, the local ecosystem will iterate on that design at lightning speed. That’s how the Chinese EV industry took over—they watched, they learned, and then they out-manufactured everyone.

Moving Beyond the Hype

Don't let the flashy trade show videos fool you. We're in the "dial-up internet" phase of humanoid robotics. The hardware is basically ready, but the operating system is barely functional. For China to bridge this gap, they have to solve the "sim-to-real" transfer problem. This is the difficulty of taking a skill learned in a computer simulation and making it work in the messy, gravity-filled real world.
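One common way to attack the sim-to-real gap is domain randomization: instead of tuning a behavior in one idealized simulator, you tune it across many simulated worlds with varied physics. The sketch below is a deliberately tiny Python illustration of that idea, not any company's pipeline. The task (pushing a box so it slides one metre), the friction range, and every function name are assumptions made for the example.

```python
import random

G = 9.81       # gravity, m/s^2
TARGET = 1.0   # desired slide distance in metres (hypothetical task)


def slide_distance(push_speed, friction):
    """Distance a box slides after a push, from v^2 = 2 * mu * g * d."""
    return push_speed ** 2 / (2.0 * friction * G)


def mean_sq_error(push_speed, frictions):
    """Average squared miss distance across a set of floors."""
    misses = [(slide_distance(push_speed, mu) - TARGET) ** 2 for mu in frictions]
    return sum(misses) / len(misses)


def tune(frictions, candidates):
    """Pick the candidate push speed with the lowest error on these floors."""
    return min(candidates, key=lambda v: mean_sq_error(v, frictions))


rng = random.Random(0)
candidates = [0.5 + 0.01 * i for i in range(400)]   # push speeds to try, m/s

nominal_floors = [0.5]                               # one idealized simulator setting
random_floors = [rng.uniform(0.2, 0.8) for _ in range(200)]   # randomized simulators

v_nominal = tune(nominal_floors, candidates)
v_random = tune(random_floors, candidates)

# Stand-in for the real world: floors the tuner never saw, from the same range.
real_floors = [rng.uniform(0.2, 0.8) for _ in range(200)]
for name, speed in (("nominal", v_nominal), ("randomized", v_random)):
    print(f"{name:>10}: speed={speed:.2f} m/s, "
          f"mean squared miss={mean_sq_error(speed, real_floors):.3f}")
```

The nominal tuning is near-perfect on the one floor it was tuned for and pays for it everywhere else. The randomized tuning gives up that perfect score in exchange for a behavior that holds up better across the range. Real systems randomize far more than friction (masses, latencies, lighting, sensor noise), which is part of why simulation at scale eats so much GPU time.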

If you’re an investor or a tech enthusiast, stop looking at how fast the robot can run. Start looking at how it handles a mistake. If a robot trips, does it catch itself? If a human gets in its way, does it find a new path instantly? Those are the signs of an actual AI brain, not just a pre-programmed script.

The "ChatGPT moment" won't be a single announcement. It'll be the first time a robot can walk into a room it's never seen before and perform a task it wasn't specifically programmed for. China has the industrial muscle to build ten million robots tomorrow. They just don't have anything to tell those ten million robots what to do yet.

To really track this progress, watch the development of "Unified Visual-Motor" models in Chinese research papers. When you start seeing those models integrated into the $16,000 bots from Unitree, that's when the shift happens. Keep an eye on the integration of local LLMs—like those from Baidu or Alibaba—into robotic hardware. That's the most likely path forward. Forget the dancing; wait for the robot that can fold your laundry without being told how.

The next step is to look at the specific sensor suites being used in the newest models. Look for LiDAR-free vision systems. If a robot can navigate using only cameras—the way humans do—it's a sign the software is finally doing the heavy lifting. Focus on the vision-language-action (VLA) models coming out of Tsinghua University and the Beijing Academy of Artificial Intelligence. That's where the real "moment" is being built.